The Operating Model for AI-Powered Product Teams

The empowered, cross-functional product team is not an organizational experiment. The model defines a small group with a shared problem space, product decision authority, and accountability for outcomes. It is a deliberate correction to a failure mode that most product organizations have experienced directly: teams optimized for output rather than impact, where execution speed was the primary signal of performance and customer outcomes were measured after the fact, if at all.

That model holds. What does not hold is the operating infrastructure built around it.

The product development lifecycle as most organizations practice it today was designed around three conditions: discovery was slow, artifact generation was expensive, and validated options arrived at a pace that the review, planning, and governance structures could absorb. AI tooling has altered all three. Product leaders who have introduced AI tooling in the past year report the same pattern: more output, more coordination overhead, and decisions that are not noticeably faster despite the acceleration. The binding constraint is no longer discovery throughput. It is the organization's capacity to direct the output that accelerated discovery now produces.

What the AI era requires is not a new empowerment philosophy. It is a more precise operating model for teams that are already empowered. The framework presented here, Directed Autonomy, is an operating model that gives empowered product teams direct access to AI-accelerated discovery and generation capacity, while maintaining the constraint infrastructure, decision rights, and governance cadence required to convert that output into business outcomes rather than coordination overhead.

Where to focus based on your situation

If you are a Head of Product or VP assessing whether your operating model is equipped for AI-accelerated conditions, the Directed Autonomy Diagnostic provides the fastest entry point. The three operating principles provide the framework to address what it surfaces.

If you are a product director navigating this transition with an existing team, start with the role-by-role section on how AI reshapes the PDLC. It identifies where the compounding effect is most consequential for each function before the diagnostic helps locate the specific structural gaps.

If you want to understand what Directed Autonomy actually changes before reading further: it does not alter the goals of the empowered-team model. It specifies the operating infrastructure (the constraints, decision rights, and governance mechanisms) that makes those goals achievable when AI has permanently compressed the discovery pace the original model was calibrated for.

What the Empowered Team Model Was Built to Solve

Locating what needs to change requires clarity on what the existing model was designed to do.

The feature factory correction

The dominant failure mode in product organizations before the empowered-team model became standard was structural, not cultural. Product teams were organized around delivery capacity rather than problem ownership. PMs wrote specifications, engineers built to those specifications, and delivery metrics tracked whether the schedule held. The customer problem behind the specification was a planning-phase concern that rarely survived contact with execution.

The empowered-team model corrected this by giving the team a problem to own rather than a specification to execute. A squad given a customer problem and the authority to determine how to solve it will, over time, develop better solutions than a squad given a feature list and told to ship it. The structure reinforces the behavior: when teams own outcomes, they invest in understanding the problem rather than minimizing the cost of executing the specification.

Discovery as the rate-limiting activity

The PDLC that emerged from this model was designed around discovery as the most constrained phase of the work. Three original assumptions shaped every artifact, cadence, and governance ritual that followed:

Research synthesis was manual and time-intensive. Customer signal accumulated slowly through structured studies, support volume, and sales conversations.

Prototyping required specialized skill. Design output was a bottleneck by definition, which determined when and how designers entered the production sequence.

Validated options arrived at a manageable pace. Sprint cadences, planning cycles, and review structures were calibrated to absorb options at the rate discovery produced them.

AI tooling is compressing all three. The pace of validated option generation is no longer the constraint. The constraint is the organizational infrastructure for directing what that generation produces.

The cross-functional governance assumption

Placing PM, designer, and engineer in the same team with shared problem ownership was a governance decision before it was a cultural one. It reduced the escalation cost for decisions the team was qualified to make at squad level and created proximity between roles that needed to exchange judgment continuously rather than through handoffs.

The cross-functional squad is a decision rights architecture more than a collaboration philosophy. That architecture is sound. What it does not address is what happens when each function in the squad acquires AI tooling that simultaneously changes the volume and velocity of decisions the squad must make.

How AI Reshapes the PDLC Across Every Role

The AI impact on product teams is not limited to one phase of the development lifecycle. It is compounding across every function simultaneously, and the operating model has not caught up with that effect.

| Role | What AI changes | What AI does not change |
| --- | --- | --- |
| Product Manager | Speed and volume of customer signal synthesis | Judgment on which problem is worth solving given strategic constraints |
| Product Designer | Artifact generation velocity; number of directions explorable per sprint | Usability judgment, system coherence, tradeoff evaluation |
| Engineering Lead | Implementation speed from spec to committed code | Reversibility assessment; platform-level governance decisions |

Discovery and synthesis: the PM's changed input environment

Customer feedback synthesis tools, including AI-powered analysis across support tickets, NPS responses, sales call transcripts, and user interviews, have changed the volume and speed at which PMs can access customer signal. A synthesis that previously required a researcher several days can now be produced in hours. Pattern recognition across large datasets is no longer a bottleneck.

What AI cannot do is determine which customer problem is the right problem to solve given the organization's strategic constraints. It can surface that a significant percentage of churned customers cited feature gaps in a particular area. It cannot determine whether solving that gap serves the customer segment the organization has decided to concentrate capacity on.

That judgment, connecting customer signal to strategic constraint, remains the PM's accountability. AI-accelerated synthesis increases the volume of signal that judgment must process. Without explicit constraints established before synthesis begins, PMs default to reacting to volume rather than applying the selection logic that makes discovery productive.

Design and prototyping: faster artifacts, unchanged judgment requirements

Figma AI, v0, Lovable, and similar tools have compressed the design artifact production cycle in ways that are now visible in how design-forward organizations plan their sprints. A designer with access to these tools can produce multiple high-fidelity directions in the time a single wireframe previously required. A PM with moderate technical comfort can produce a working interface concept without design involvement.

The judgment behind those artifacts does not accelerate with the tooling. Specifically:

Which direction best navigates the usability tradeoffs available given the problem constraints

What system coherence implications the interaction model carries across the broader product surface

Whether the solution fits the product's established mental model or introduces friction that compounds over time

The artifact arrives faster. The reasoning that validates it still requires design expertise applied deliberately. Organizations that treat AI-generated design artifacts as evidence of design judgment, rather than as raw material for it, are removing the accountability signal from a phase of the PDLC that depends on it. The PM/designer collaboration model this produces, and the constraint infrastructure it requires, are addressed in full in How the PM and Product Designer Relationship Is Shifting With AI.

Engineering execution: AI coding and the reversibility question

Copilot, Claude Code, and similar tools have changed the pace at which engineers can produce working code from a defined specification. The implication most product organizations focus on is throughput: the same team can deliver more. The implication that matters more for the operating model is reversibility.

When AI-assisted engineering can produce implementation faster, the time between a bad product decision and a committed codebase compresses. The engineer's governance function in the AI-era PDLC is not primarily about code production. It is about identifying, before implementation begins:

Which decisions are structurally reversible at the squad level

Which carry platform-level implications that require cross-squad review

Which data model or surface changes affect other teams and require governance above the squad

An engineering lead who uses Claude Code to accelerate implementation is not reducing the need for reversibility assessment. The assessment is more consequential than before because the implementation can arrive faster than the organizational review that should precede it.

The cumulative effect across the PDLC

The reason AI-enabled product teams generate coordination overhead rather than proportional throughput gains is structural, not behavioral. AI tooling has simultaneously accelerated discovery synthesis, design prototyping, and engineering implementation. Each acceleration is individually significant. Together, they produce a PDLC where the pace of decisions requiring human judgment has increased across every function at the same time.

The governance infrastructure designed to absorb those decisions, including sprint cadences, product review forums, planning cycles, and decision escalation paths, was calibrated for a slower rate of arrival. The tooling is not the cause of the overhead. The unchanged governance cadence is.

Directed Autonomy: The Operating Model for AI-Powered Teams

Directed Autonomy describes an operating model in which empowered product teams have direct access to organizational data, customer systems, and AI platforms that accelerate their decision-making capacity, with their constraints, decision rights, and outcomes explicitly defined and tracked at the organizational level.

Directed Autonomy is not total automation, which removes human judgment from the production sequence and relies on AI to determine direction. It is also not traditional empowerment without governance, which delegates problem ownership to teams without specifying the constraints those teams must operate within. The distinction from traditional empowerment frameworks is not in the goals: both aim for autonomous teams that own customer problems and deliver outcomes. The distinction is in the operating infrastructure: Directed Autonomy specifies what traditional empowerment left implicit, namely the constraints, decision rights, and governance mechanisms that make autonomous judgment produce business results rather than organizational noise.

The framework reflects a manufacturing-derived insight that applies directly to product organizations: the most effective systems are not the ones that automate the most steps. They are the ones that identify which steps require human judgment and design the system to route that judgment efficiently, while automating the steps that do not require it. In product development terms, AI handles the generation. Human judgment handles the selection, tradeoff documentation, and reversibility assessment.

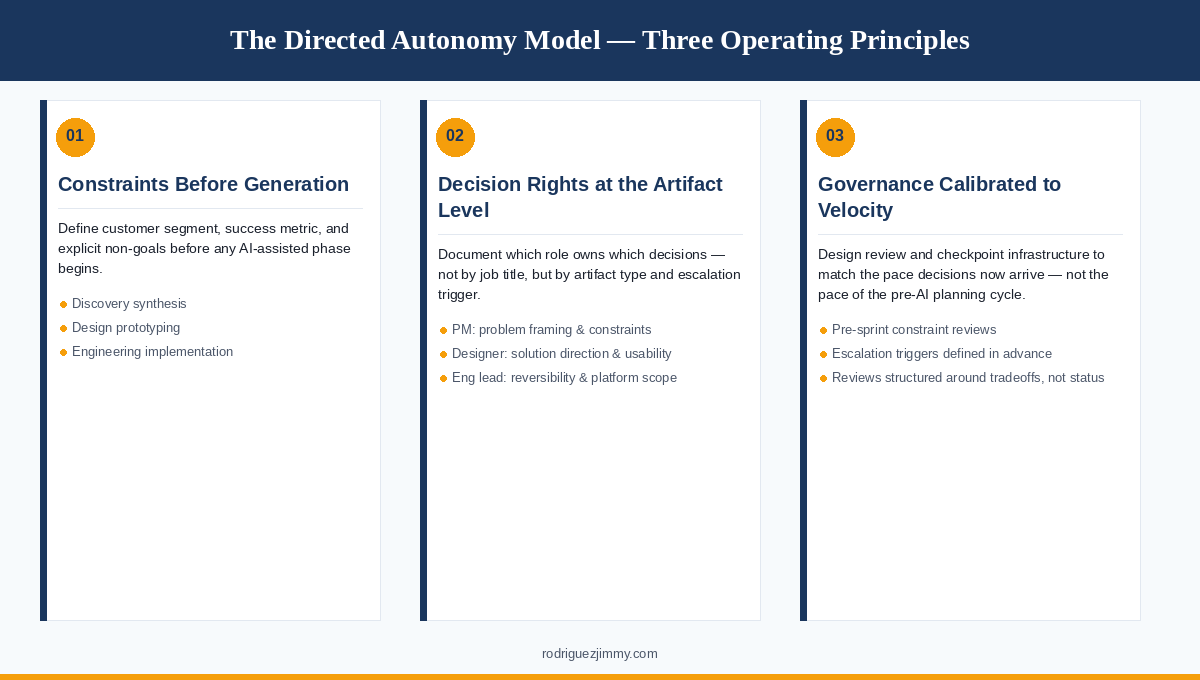

Three operating principles define the model.

Principle 1: Constraints before generation

Every AI-accelerated phase of the PDLC requires explicit constraints documented before that phase begins. Three phases are most affected:

Discovery synthesis: Customer segment, primary success metric, and explicit non-goals defined before AI synthesis begins.

Design prototyping: Tradeoffs the organization has already committed to accepting documented before a Figma AI or v0 sprint starts.

Engineering implementation: Reversibility classification and cross-squad dependency review completed before AI-assisted coding accelerates past the governance checkpoint.

Without these pre-generation inputs, AI acceleration produces volume. With them, it produces selectable options. This is the infrastructure distinction that most product strategies fail to establish before teams begin building.
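One way to make "constraints before generation" concrete is to treat the pre-generation inputs as a record that must be complete before a synthesis run is allowed to start. The sketch below is illustrative only and covers the discovery synthesis phase. The class name `DiscoveryConstraints`, its field names, and the helper functions are assumptions made for this example, not part of any tool named in this article.

```python
from dataclasses import dataclass, field


@dataclass
class DiscoveryConstraints:
    """Hypothetical record of the inputs required before AI synthesis begins."""
    customer_segment: str = ""
    success_metric: str = ""
    non_goals: list[str] = field(default_factory=list)


def missing_constraints(constraints: DiscoveryConstraints) -> list[str]:
    """Return the names of any constraints that are still undocumented."""
    missing = []
    if not constraints.customer_segment.strip():
        missing.append("customer_segment")
    if not constraints.success_metric.strip():
        missing.append("success_metric")
    if not constraints.non_goals:
        missing.append("non_goals")
    return missing


def can_start_synthesis(constraints: DiscoveryConstraints) -> bool:
    """Allow the AI-assisted phase only when every constraint is documented."""
    return not missing_constraints(constraints)


# Example: a draft with only the segment filled in is blocked.
draft = DiscoveryConstraints(customer_segment="mid-market admins")
print(missing_constraints(draft))   # lists the two undocumented fields
print(can_start_synthesis(draft))   # False
```

The same shape could be repeated for the design and engineering phases, with fields for accepted tradeoffs and reversibility classification respectively. The gate does not judge whether the constraints are good, only that they exist before generation starts.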

Principle 2: Decision rights assigned at role and artifact level

Directed Autonomy requires explicit documentation of which decisions belong to which role in the squad, at the artifact level rather than at the job title level:

Problem framing and constraint documentation: PM ownership, completed before any generation phase begins.

Solution direction and usability judgment: Designer ownership. AI generates options; the designer evaluates and selects based on usability, system coherence, and fit.

Reversibility assessment and platform implications: Engineering leadership ownership, triggered before implementation commits.

Decisions that span these boundaries require a named joint owner and a defined escalation path. When AI tools allow multiple roles to produce the same artifact type, decision authority cannot be inferred from artifact ownership. It must be documented.
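Documented decision rights can be as simple as a lookup table that fails loudly when a decision type has no named owner. The following is a minimal sketch under stated assumptions: the decision-type keys, the `scope_tradeoff` entry, and its escalation path are hypothetical examples, while the three single-owner entries mirror the ownership described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRight:
    """Who owns a decision type, plus joint owner and escalation path if it spans roles."""
    owner: str
    joint_owner: Optional[str] = None
    escalation_path: Optional[str] = None


# Hypothetical registry; keys are illustrative decision types.
DECISION_RIGHTS = {
    "problem_framing": DecisionRight(owner="PM"),
    "solution_direction": DecisionRight(owner="Designer"),
    "reversibility_assessment": DecisionRight(owner="Engineering Lead"),
    # A boundary-spanning decision needs a named joint owner and an escalation path.
    "scope_tradeoff": DecisionRight(
        owner="PM",
        joint_owner="Designer",
        escalation_path="product review forum",
    ),
}


def decision_owner(decision_type: str) -> DecisionRight:
    """Look up the documented owner; refuse to infer one when none is documented."""
    try:
        return DECISION_RIGHTS[decision_type]
    except KeyError:
        raise LookupError(
            f"No documented owner for {decision_type!r}; document it before deciding"
        ) from None


print(decision_owner("solution_direction").owner)  # Designer
```

Raising on an unknown decision type is a deliberate choice in this sketch: it surfaces the gap at the moment authority would otherwise be inferred from whoever produced the artifact.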

Principle 3: Governance calibrated to discovery velocity, not planning cycle timing

The review and checkpoint infrastructure in the PDLC should be designed to match the pace at which decisions requiring human judgment now arrive, not the pace at which they arrived before AI tooling was introduced. This does not mean more meetings. It means governance mechanisms defined once and applied automatically:

Pre-sprint constraint reviews before any AI-assisted phase begins

Artifact-level ownership documented at the start of each sprint, not negotiated mid-sprint

Escalation triggers specified in advance so individual decisions do not require individual meetings to resolve

A well-structured product review forum operating on this logic handles AI-accelerated teams more effectively than a more frequent review cadence operating on the original model.
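"Defined once and applied automatically" can be read as a small set of escalation rules evaluated against each proposed change, so that routing does not depend on someone calling a meeting. The sketch below assumes a hypothetical `ProposedChange` record and a hand-written rule list. The first two routes follow the reversibility questions described earlier in this article. The third route, sending squad-irreversible changes to the product review forum, is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProposedChange:
    """Hypothetical description of a change, filled in before implementation begins."""
    title: str
    reversible_at_squad_level: bool
    touches_platform: bool = False
    affects_other_squads: bool = False


# Each trigger: (description, predicate, where the decision is routed).
ESCALATION_TRIGGERS = [
    ("platform-level implication",
     lambda c: c.touches_platform,
     "cross-squad review"),
    ("data model or surface change affecting other teams",
     lambda c: c.affects_other_squads,
     "governance above the squad"),
    ("not reversible at squad level",  # route is an assumption for this example
     lambda c: not c.reversible_at_squad_level,
     "product review forum"),
]


def route(change: ProposedChange) -> list[str]:
    """Return every escalation route whose trigger fires, or leave it with the squad."""
    fired = [dest for _, fires, dest in ESCALATION_TRIGGERS if fires(change)]
    return fired or ["squad decides"]


print(route(ProposedChange("rename button label", reversible_at_squad_level=True)))
```

A change that trips no trigger stays with the squad, which keeps the default path fast. Only the changes that match a predefined condition generate review work.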

The Directed Autonomy Diagnostic

The questions below are designed for product directors and heads of product assessing whether their operating model is equipped for AI-accelerated conditions. Answer each with Yes, Partial, or Not Yet.

The Infrastructure That Separates Leverage from Volume

The empowered-team model does not fail under AI pressure because its principles are wrong. Autonomous, cross-functional squads that own customer problems and have the authority to make product decisions remain the most effective organizational unit for product development. What fails is the governance infrastructure that makes empowered decision-making function: that infrastructure was calibrated for a discovery pace that AI has permanently altered.

The product organization structure that supports Directed Autonomy requires three things established before AI tooling outpaces the capacity to govern it:

Platform ownership: clear PM accountability for the shared infrastructure AI-assisted engineering is now building against faster than before

Cross-squad decision infrastructure: defined escalation paths for decisions that span squad boundaries, triggered automatically rather than discovered in conflict

Portfolio-level constraint governance: strategic constraints maintained and distributed at the organizational level, so squads are not reconstructing them independently for each sprint

Directed Autonomy describes that operating model. The organizations that use AI as genuine leverage, rather than as a source of expanded surface area and coordination overhead, are the ones that recognized the operating model itself as the design problem, identified which original assumptions no longer held, and rebuilt the constraint infrastructure before the pace of generation made the absence visible.

If the diagnostic above surfaces gaps that are structural rather than operational, that is usually an accurate read. The work to close them is not a training program or a tooling upgrade. It is a governance redesign, and it is most effective when it precedes the next wave of AI adoption rather than following it.