How Product Leaders Make Prioritization Decisions Under Uncertainty

Most prioritization problems are not actually prioritization problems.

They are framing problems. The team has assembled a list of things it could build, applied a scoring model, and produced a ranked output. Then leadership overrides the top item, or the PM revises the scores until the preferred answer surfaces, or a new request arrives from sales and the entire exercise starts over.

The framework did not fail. The underlying issue was not ranking accuracy. It was unresolved assumptions about strategic constraints, customer segment focus, and acceptable tradeoffs, and no scoring model resolves those.

Product leaders who make good prioritization decisions under uncertainty are not using better frameworks. They are operating with a different model of what prioritization actually requires: one that starts with constraints rather than options, separates decision ownership from decision quality, and treats uncertainty as a structural input rather than a problem to eliminate before committing.

Why Frameworks Produce False Confidence

RICE, ICE, weighted scoring, opportunity sizing: these tools are useful for one narrow purpose. They make implicit assumptions explicit within a set of options that are already reasonably defined. They force teams to articulate reach, impact, and confidence estimates, which is valuable. The act of scoring surfaces disagreements that would otherwise stay hidden.

What they cannot do is resolve the harder questions that precede the ranking:

Are these the right options to be evaluating at all?

Are we solving the right problem for the right segment?

What assumptions are embedded in these estimates, and how would the ranking change if those assumptions were wrong?

Which items on this list reflect genuine customer demand versus internal advocacy?

A RICE score is only as reliable as the inputs. When reach estimates come from gut feel, impact scores reflect enthusiasm rather than evidence, and confidence ratings are compressed toward the middle to avoid conflict, the output looks precise and is not.
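The fragility is easy to see with a little arithmetic. The sketch below uses the common RICE formula, reach times impact times confidence divided by effort, on a two-item backlog. The item names and every number are hypothetical, invented only to show how a single corrected input can flip a ranking that looked settled.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Item:
    name: str
    reach: float       # users affected per quarter (an estimate)
    impact: float      # relative impact multiplier
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months


def rice(item: Item) -> float:
    """Common RICE formula: (reach * impact * confidence) / effort."""
    return item.reach * item.impact * item.confidence / item.effort


def ranking(items: list[Item]) -> list[str]:
    """Item names ordered from highest to lowest RICE score."""
    return [i.name for i in sorted(items, key=rice, reverse=True)]


# Hypothetical backlog; all figures are invented for illustration.
bulk_export = Item("bulk export", reach=4500, impact=1.0, confidence=0.8, effort=4)
sso = Item("single sign-on", reach=1500, impact=2.0, confidence=0.8, effort=3)

baseline = ranking([bulk_export, sso])

# Assume the reach figure for bulk export was a gut-feel guess that
# proves 30% too high once real usage data is checked.
corrected = replace(bulk_export, reach=bulk_export.reach * 0.7)
revised = ranking([corrected, sso])

print(baseline)  # bulk export ranks first
print(revised)   # single sign-on ranks first
```

Nothing about the scoring method changed between the two runs. Only one estimate moved, by an amount well within the error of a guess, and the "objective" ordering reversed.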

Scoring models persist because they create procedural legitimacy. When a ranked list is produced through a visible framework, disagreement becomes harder to express. The output appears objective even when the inputs remain subjective. The framework rewards visible structure over real clarity, which is why it survives even when it repeatedly fails to improve decisions.

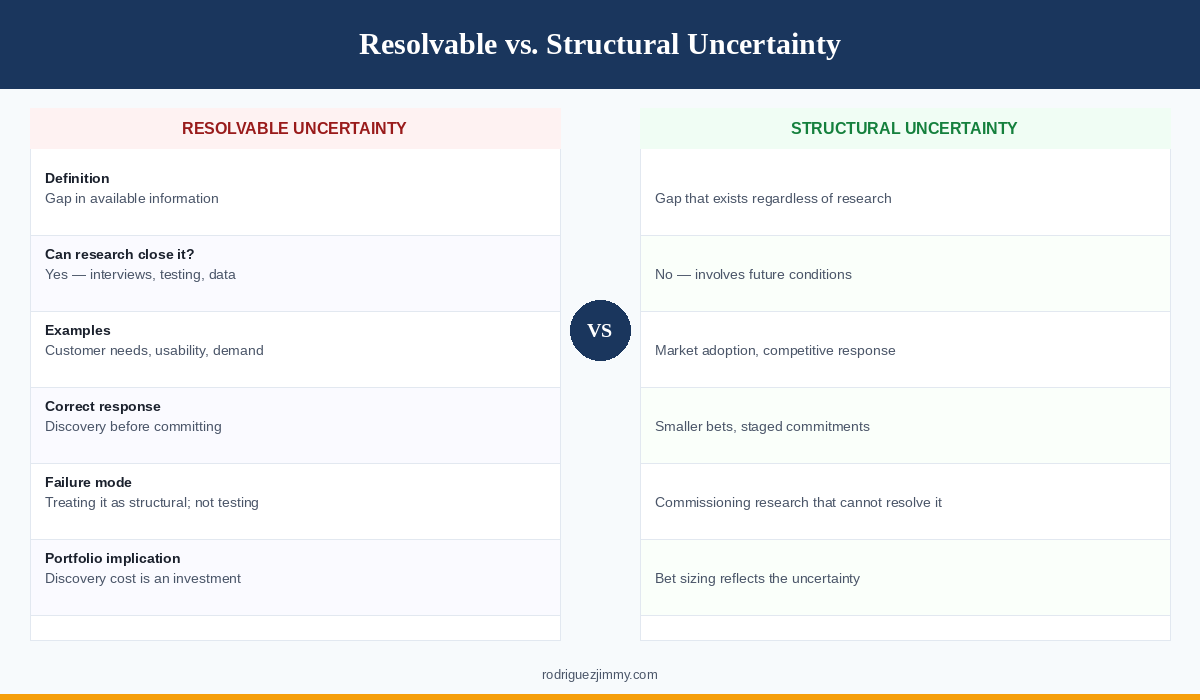

The two types of uncertainty product leaders actually face

Uncertainty in prioritization comes in two distinct forms that require different responses.

Resolvable uncertainty is a gap in information that could be closed before a commitment is made, through customer interviews, prototype testing, data analysis, or structured discovery. Teams that treat resolvable uncertainty as permanent operate defensively, deferring decisions indefinitely or building on assumptions they never tested because testing felt slow.

Structural uncertainty exists regardless of how much research is done. It involves predicting market behavior, customer adoption, or competitive response in conditions that do not yet exist. No amount of discovery eliminates it.

Structural uncertainty should change investment sizing. When outcomes cannot be validated through research, capacity allocation should reflect that uncertainty through smaller initial bets and staged commitments. Managing structural uncertainty is a portfolio design problem, not a discovery problem.

Conflating these two types produces predictable failures. Teams commission research to resolve structural uncertainty and are surprised when it does not. Or they treat resolvable uncertainty as structural and ship without testing assumptions they could have validated in two weeks.

The diagnostic question before committing to any prioritization decision: which type of uncertainty is actually present, and does the response address that type?

What Prioritization Actually Requires at the Leadership Level

Senior PMs and product leaders encounter prioritization differently than earlier-career PMs. The surface problem is the same: too many options, not enough capacity. But the underlying challenge has shifted.

Earlier in a PM career, the prioritization challenge is primarily about rigor: are the estimates defensible, is the reasoning documented, can you explain the tradeoffs clearly. These are real skills and they matter.

At the leadership level, the challenge is different. The estimates will always be incomplete. The data will always be ambiguous at the edges that matter most. Stakeholders will always have competing interpretations of the same signal. The question is no longer whether uncertainty can be eliminated before deciding. It is whether the team can make a call that is defensible given what is known, own the consequences if it is wrong, and create the conditions to learn fast enough to course-correct.

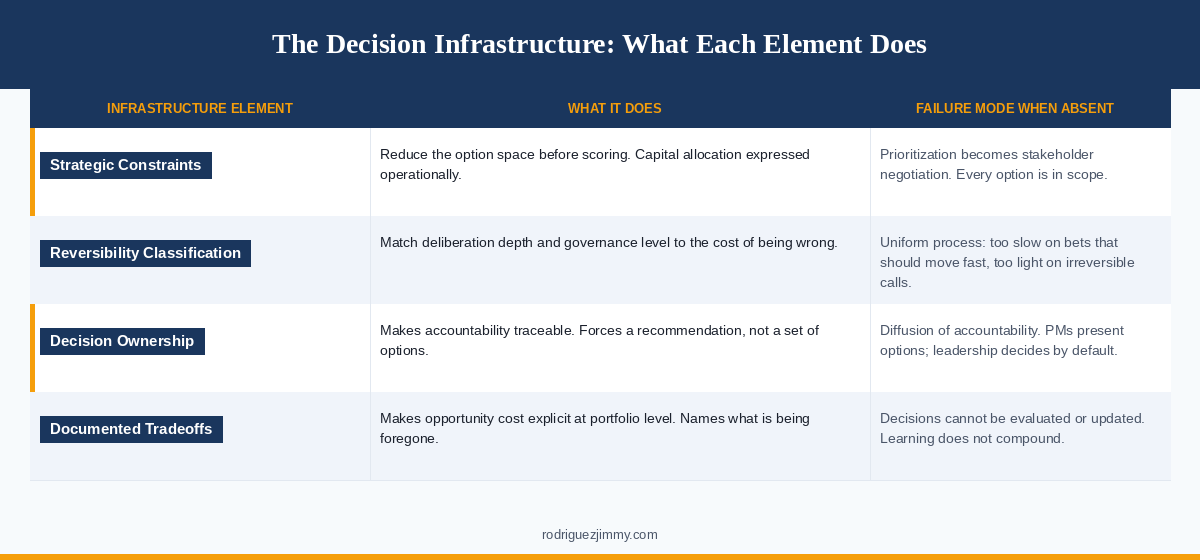

Strategic constraints as the primary filter

The most reliable way to prioritize under uncertainty is not to score more options more carefully. It is to reduce the option space before scoring begins, using strategic constraints that reflect what the organization has already decided.

Strategic constraints answer questions like: Which customer segment is the focus right now? What outcomes is the product responsible for moving this quarter? What is explicitly out of scope, regardless of how compelling the opportunity looks?

Strategic constraints are not tactical guardrails. They are capital allocation decisions expressed in operational form. When constraints are explicit, prioritization becomes an application of strategy rather than a negotiation of preferences. When they are absent, every option is theoretically in scope, every stakeholder preference is a legitimate input, and the PM's job shifts from making decisions to managing competing claims.

When these constraints are operational, meaning teams can use them to make autonomous decisions without escalation, prioritization becomes significantly more tractable. Many options that would otherwise require deliberation are already excluded. The remaining set is smaller, more coherent, and easier to evaluate.
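One way to picture constraints acting as a filter before any scoring happens is the minimal sketch below. The `Option` and `Constraints` types, the field names, and the sample backlog are all assumptions made for illustration. They are not a prescribed schema, and real constraints are rarely this clean.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    segment: str   # customer segment the option serves
    outcome: str   # outcome the option is expected to move


@dataclass(frozen=True)
class Constraints:
    focus_segments: frozenset[str]    # segments in focus right now
    quarter_outcomes: frozenset[str]  # outcomes the product owns this quarter
    out_of_scope: frozenset[str]      # excluded regardless of appeal


def in_scope(option: Option, c: Constraints) -> bool:
    """True only if the option survives every strategic constraint."""
    return (
        option.name not in c.out_of_scope
        and option.segment in c.focus_segments
        and option.outcome in c.quarter_outcomes
    )


# Hypothetical constraints and backlog, invented for illustration.
constraints = Constraints(
    focus_segments=frozenset({"mid-market"}),
    quarter_outcomes=frozenset({"activation", "retention"}),
    out_of_scope=frozenset({"mobile app rewrite"}),
)

backlog = [
    Option("guided onboarding", "mid-market", "activation"),
    Option("enterprise audit log", "enterprise", "retention"),
    Option("mobile app rewrite", "mid-market", "retention"),
    Option("usage alerts", "mid-market", "retention"),
    Option("referral program", "mid-market", "acquisition"),
]

# Only the options that pass every constraint go on to be scored.
shortlist = [o.name for o in backlog if in_scope(o, constraints)]
print(shortlist)
```

In this example three of the five options never reach a scoring discussion. The filter involves no judgment at the point of use, which is what lets a team apply it without escalation.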

The clearest signal of a broken prioritization system: the PM is spending most of their time managing stakeholder expectations rather than evaluating options against a shared direction. The root cause is almost always missing or unenforced strategic constraints, not individual PM capability.

The reversibility test

Under genuine uncertainty, reversibility is one of the most important inputs to how much deliberation a decision deserves.

Reversible decisions include experiments, phased rollouts, features that can be removed, and bets that can be unwound without significant technical or reputational cost. These deserve less deliberation and faster commitment. The cost of being wrong is bounded, and moving fast to learn from the outcome is more valuable than spending weeks building confidence in an estimate that will remain uncertain regardless.

Irreversible decisions include platform architecture choices, major customer commitments, structural changes to the product experience, and bets that foreclose other options. These deserve more deliberation and explicit documentation of the reasoning. The cost of being wrong is high or unbounded. Slowing down here is rational, not overcautious.

The level at which a decision is made should correspond to its reversibility. Reversible bets belong closer to squad-level autonomy. Irreversible bets require broader review, explicit documentation, and governance appropriate to the stakes involved.

Most prioritization processes treat all decisions with the same level of scrutiny. The result is too much process on reversible bets and too little on irreversible ones: a team that moves slowly on things that should be fast experiments and under-deliberates on things that will be difficult to unwind.
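The routing rule described above can be sketched in a few lines. This is a deliberately simplified illustration: the `Bet` fields, the two review levels, and the sample bets are assumptions chosen for the example, and real decisions sit on a spectrum rather than in two buckets.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewLevel(Enum):
    SQUAD = "squad-level autonomy"
    BROAD = "broader review with documented reasoning"


@dataclass(frozen=True)
class Bet:
    name: str
    costly_to_unwind: bool          # significant technical or reputational cost
    forecloses_other_options: bool  # commits the product to one path


def is_reversible(bet: Bet) -> bool:
    """A bet is reversible only if it is cheap to unwind and keeps options open."""
    return not (bet.costly_to_unwind or bet.forecloses_other_options)


def review_level(bet: Bet) -> ReviewLevel:
    """Match the level of scrutiny to the reversibility of the bet."""
    return ReviewLevel.SQUAD if is_reversible(bet) else ReviewLevel.BROAD


# Hypothetical bets, invented for illustration.
bets = [
    Bet("pricing page experiment", costly_to_unwind=False, forecloses_other_options=False),
    Bet("new platform architecture", costly_to_unwind=True, forecloses_other_options=True),
    Bet("commitment to a major customer", costly_to_unwind=True, forecloses_other_options=False),
]

routing = {b.name: review_level(b) for b in bets}
for name, level in routing.items():
    print(f"{name}: {level.value}")
```

The point of the sketch is the asymmetry: either condition alone is enough to send a bet to broader review, while squad-level autonomy requires both to be absent.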

Decision ownership versus decision quality

There is a distinction between making a high-quality decision and owning the decision you make. Both matter. They are not the same.

A high-quality decision uses the best available information, considers the relevant tradeoffs, and documents the reasoning clearly enough that it can be evaluated later. This is the mechanics of good decision-making.

Ownership means committing to the decision: not hedging against all possible outcomes, not building in so many qualifications that no one can tell what was actually decided, not revisiting the call every time new information arrives unless that information is genuinely material.

When decision ownership is ambiguous, PMs default to option presentation because escalation pathways are unclear. The result is diffusion of accountability rather than deliberate delegation. The pattern looks like thoroughness. It functions as a structural gap in how decision rights are assigned.

The language shift that marks the move from option-presenter to decision-owner is small but consequential: from "here are the options and their tradeoffs" to "I recommend X, accepting Y tradeoff, because Z, and here is what would change my mind."

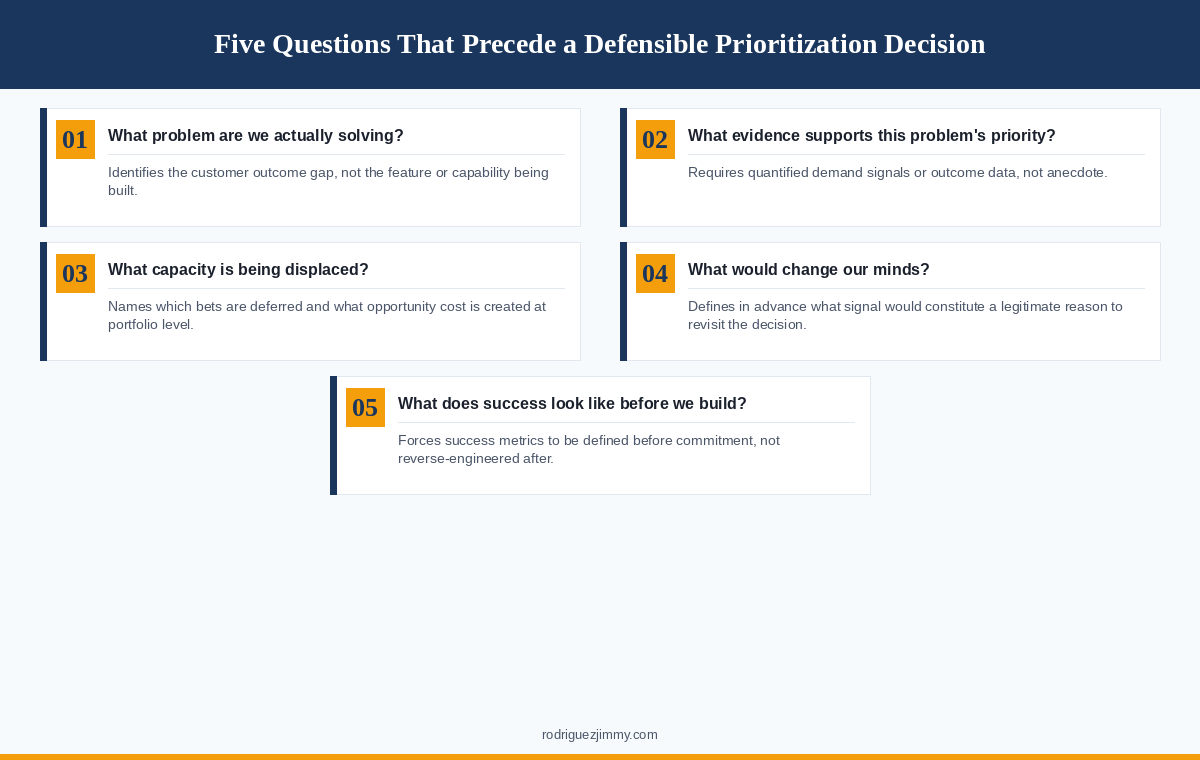

How Product Leaders Actually Make These Calls

The diagnostic process that precedes a good prioritization decision follows a predictable structure, even when the specific content varies. These are the five questions that separate a defensible call from a guess with documentation attached.

What problem are we actually solving?

Not what feature is being built or what capability is being added. The question is: what customer behavior or outcome is currently insufficient, and why does closing that gap matter now relative to other gaps?

Teams that skip this question build solutions to problems they have not fully defined, then measure success by whether the solution shipped rather than whether the problem moved.

What evidence supports the priority of this problem?

Not just that the problem exists. All product problems exist somewhere. The question is whether this problem is more important than the alternatives being deprioritized to address it.

The answer should produce quantified demand signals, customer segment data, or outcome evidence. A list of recent support tickets and one compelling customer story are not sufficient.

What engineering capacity is being displaced, and what opportunity cost does that create?

Every prioritization decision is a deprioritization decision. When an item is added to the roadmap, another item moves out or gets deferred. Making that tradeoff explicit at the portfolio level, naming which bets are being displaced and what outcomes are being forgone, is what separates a prioritization decision from a list of things someone wanted to build.

What would change our minds?

A decision made without a clear answer to this question cannot be properly evaluated or updated. Identifying in advance what new information would constitute a legitimate reason to revisit the call protects against two failure modes: premature pivoting in response to noise, and defensive rigidity that ignores genuine signals because changing course feels like failure.

What does success look like before we build it?

Success metrics defined after a feature ships are almost always reverse-engineered to fit the outcome. Defining them before commitment creates a clear learning checkpoint and forces the team to confront whether they actually know what they are trying to change.

Where AI Fits in Prioritization Under Uncertainty

AI tools have become genuinely useful in the parts of prioritization that involve pattern recognition across large inputs:

Synthesizing customer feedback at volume

Identifying recurring themes in support data

Modeling scenarios across a set of variables

Generating option sets the team might not have considered

These are real contributions to the earlier stages of the prioritization process, specifically the parts concerned with assembling information, surfacing patterns, and generating hypotheses worth evaluating.

AI also increases the number of viable-looking options available to a team. As option generation becomes easier and cheaper, constraint discipline becomes more important, not less. Without strong strategic constraints, prioritization becomes an evaluation problem that expands faster than capacity can absorb. The organizational response to AI-accelerated option generation should be tighter constraints, not more sophisticated scoring.

What AI does not do is resolve the judgment questions: whether those patterns matter, whether the tradeoffs are acceptable, or whether the team should commit. A model can identify that a particular problem appears frequently in customer feedback. It cannot determine whether that problem is strategically important relative to the alternatives, whether the segment experiencing it is the right focus, or whether solving it now is the right bet given what the organization knows.

A PM who cannot defend a prioritization decision without showing the AI output that produced it does not own the decision. The AI accelerated the information-gathering. The judgment is still required, and it still belongs to the person making the call.

A Prioritization Diagnostic for High-Uncertainty Decisions

When a prioritization decision involves genuine structural uncertainty and meaningful stakes, work through these questions before committing:

On the problem:

Is the customer problem clearly defined, or are we working backward from a solution that someone internally already favors?

Which customer segment does this affect, and is that segment a current strategic priority?

What evidence quantifies the scope of this problem?

On the decision:

Is this a reversible or irreversible bet? Do the deliberation process and governance level match the reversibility?

What engineering capacity is being displaced, and what opportunity cost does that create at the portfolio level?

Can the team articulate a specific recommendation rather than a set of options?

On the outcome:

What metrics would indicate this decision was correct within a defined timeframe?

What would change our minds after committing, and how would we recognize that signal?

If outcomes are negative, can the organization distinguish between flawed hypothesis selection and flawed execution?

This diagnostic is not a scoring model. It produces no ranked output. Its purpose is to surface gaps in reasoning before commitment rather than after, when the cost of identifying them is significantly higher.

The Infrastructure That Makes Prioritization Improvable

Prioritization quality does not improve through better frameworks. It improves when the decision infrastructure improves: explicit constraints that reduce the option space, documented tradeoffs that make decisions real and evaluable, calibrated reversibility that matches governance to stakes, and defined ownership that makes accountability traceable.

Product leaders who make consistently good prioritization decisions have usually made enough bad ones to understand what caused them. Not bad data, not insufficient research, but unclear constraints, unowned tradeoffs, and decisions that were never specific enough to be wrong in a useful way.

That infrastructure is what separates product organizations that get better at prioritization over time from those that keep running the same frameworks and getting surprised by the same outcomes.