How to Write a PRD: A Product Manager's Guide (+ Free Template)

The most common failure mode for a PRD is not that it is missing sections. It is that engineers read it once, nod, and then spend the next three weeks asking the PM the same questions the document was supposed to answer.

The reason is structural. The PRD described the solution before establishing the problem. It listed capabilities without explaining the context that makes them non-negotiable. It declared success as "launch" or "feature complete" rather than as a measurable change in user behavior. And somewhere at the bottom, there was a section called "Open Questions" that contained nothing — because admitting uncertainty felt like a signal that the work was not ready.

That document does not guide a build. It documents a decision that has already been made and asks engineering to fill in the gaps.

The result is predictable: engineers make scope calls the PM should have made, designers interpret capabilities in ways that no one anticipated, and the post-launch retrospective surfaces the same misalignments that a clearer document would have prevented.

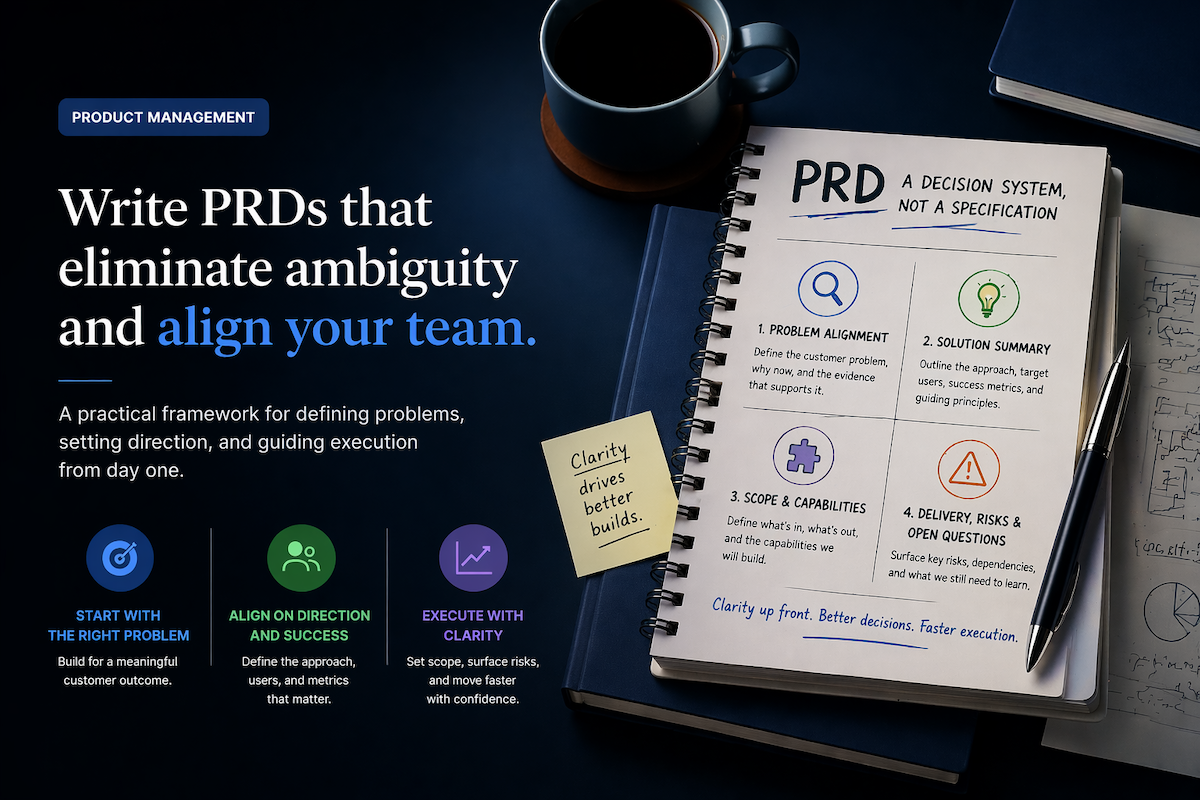

A PRD Is a Decision System, Not a Specification

The core misunderstanding that produces weak PRDs is treating them as documentation of what will be built rather than as a system for enabling decisions about how to build it.

What a PRD is not:

Not a specification. A spec prescribes how something should be built. When a team treats a PRD as a spec, they write implementation details into it, constrain design before designers have engaged with the problem, and produce a document engineers can follow but cannot apply judgment to.

Not a contract. Contracts fix terms. When a team treats a PRD as a contract, open questions get omitted (because uncertainty looks like incompleteness), scope gets locked prematurely (because the document becomes a commitment), and the document stops being useful the moment reality diverges from it — which is usually within the first two weeks of the build.

Not a pitch deck. A PRD does not exist to convince stakeholders the work is worth doing. By the time a PRD is written, that decision has already been made.

What a PRD actually is: a decision system. Each section exists to eliminate a specific category of ambiguity — about the problem, the users, the scope, the success criteria, the constraints — so that the build team can make judgment calls confidently, without routing every edge case back through the PM. If the team is still doing that mid-sprint, the PRD did not function as a decision system. It functioned as a record.

This distinction shapes everything about how the four sections below should be written.

How Each PRD Section Eliminates a Different Category of Ambiguity

Most PRD guides present a section list without explaining what each section is actually doing. The four sections that follow cover the natural sequence of decisions in a product build: establish the problem, define the solution direction, constrain the scope, and surface what is still unknown. Each section targets a specific type of ambiguity that the build team cannot resolve without it. When a section is weak, the team resolves that ambiguity themselves — usually inconsistently, and usually mid-sprint.

| # | Section | Subsections | What it establishes | What goes wrong when it's weak |
|---|---|---|---|---|
| 01 | Problem Alignment (or Opportunity) | Why Now; Background & Evidence | The core problem and for whom, with timing rationale and supporting evidence | Engineering interprets scope loosely. Design solves the visible symptom. Every downstream decision compensates for the vague opening. |
| 02 | Solution Summary | Target Users; Definition of Success; UX / Design Principles | The direction, who it's for, what success looks like, and how the product should behave | Each function optimizes for its own interpretation of the user. "Done" defaults to shipped. Design decisions are made without shared principles to resolve tradeoffs. |
| 03 | Scope & Capabilities | Key Capabilities; In-Scope: Detailed User Stories; Out-of-Scope | What the system must enable, what is in, and (equally) what is explicitly out | Scope expands during execution because reasonable people fill ambiguity with what seems logical. Capabilities become prescriptive implementation details that constrain design unnecessarily. |
| 04 | Delivery, Risks & Open Questions | Release Plan & Milestones; Constraints & Assumptions; Open Questions & Risks | Timing, constraints, and what is still unknown, with a named owner for each open item | Hard questions get deferred and resurface during engineering implementation, when changing direction is most expensive. Constraints go unstated until they block the build. |

The four sections of the PRD template, with subsections and failure modes.

1. Problem Alignment (or Opportunity): Why Engineers End Up Making Scope Calls

The most common reason engineers end up making scope decisions that should have been made by the PM is not that engineers are overstepping. It is that the problem was not defined clearly enough for anyone to evaluate scope against. When Problem Alignment is vague, scope becomes a judgment call that defaults to whoever is building.

The section body is where the core customer problem or opportunity is stated directly: not in a subsection, but as the opening of the section itself. If it cannot be written in two or three sentences, the problem is not yet understood well enough to build from. That is not a failure; it is a signal that discovery is incomplete, and building now will compound the ambiguity rather than resolve it.

Two subsections follow:

Why Now explains the timing rationale — what makes this the right moment to solve this problem rather than later. Without it, the team cannot evaluate whether this work should displace something else currently in progress. Roadmap dependencies, market shifts, and cost-of-delay analysis belong here.

Background & Evidence provides the data that transforms a problem statement from an opinion into a diagnosis: customer interview patterns, usage and retention data, support ticket volume, competitive context. A problem statement without evidence can be argued with. With evidence, it becomes the shared starting point the full team acts from.

2. Solution Summary: What Happens When Each Function Picks Its Own Target User

When the solution direction is left implicit, each function applies its own interpretation of who the product is for. Design optimizes for the persona it finds most compelling. Engineering builds for the use case that seemed most tractable. The PM discovers the mismatch at review rather than at kickoff — and by that point, the misalignment has already shaped implementation decisions that are expensive to reverse.

The section body is a short, high-level description of the proposed solution that maps clearly back to the problem statement. It describes the direction without prescribing implementation. If the solution cannot be explained in under sixty seconds in terms of the problem it addresses, it has drifted from that problem.

Three subsections add the specificity the build team needs to act without constant clarification:

Target Users names the primary segment the solution is for — and explicitly who it is not for. The exclusion is as important as the inclusion. Every capability that follows should be evaluable against the named target users. If a capability only makes sense for a different segment, this section surfaces that conflict before it becomes an execution problem.

Definition of Success defines what success looks like in measurable terms. Not "improve onboarding" but "increase activation rate from 34% to 45% within 60 days of launch." Not "reduce friction" but "reduce median time-to-first-action from 4.2 minutes to under 90 seconds." This is the subsection engineering uses to know when the work is done. A PRD without a measurable Definition of Success shifts that judgment to delivery velocity: done means shipped, regardless of whether anything improved.
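A measurable Definition of Success is precise enough to be checked mechanically, which is itself a useful test of whether it is measurable at all. The sketch below is a hypothetical illustration, not part of the template: it assumes a criterion can be reduced to a metric, a baseline, a target, and a window in days after launch, and it uses the activation example from the paragraph above. The `SuccessCriterion` class, its fields, and the rule that a reading taken after the window does not count are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    """Hypothetical encoding of a measurable Definition of Success.

    Assumes a higher metric value is better (e.g. activation rate).
    """
    metric: str
    baseline: float
    target: float
    window_days: int  # days after launch within which the target must be reached

    def is_met(self, observed: float, days_since_launch: int) -> bool:
        # Assumption: a reading taken after the window closes does not count.
        if days_since_launch > self.window_days:
            return False
        return observed >= self.target

    def progress(self, observed: float) -> float:
        # Share of the baseline-to-target gap closed so far (1.0 = target reached).
        return (observed - self.baseline) / (self.target - self.baseline)


# "Increase activation rate from 34% to 45% within 60 days of launch."
activation = SuccessCriterion("activation rate (%)", baseline=34.0, target=45.0, window_days=60)

print(activation.is_met(observed=41.5, days_since_launch=45))  # False: still below 45%
print(activation.is_met(observed=46.0, days_since_launch=58))  # True
print(round(activation.progress(observed=41.5), 2))            # 0.68
```

A goal like "improve onboarding" cannot be written this way: there is no baseline, target, or window to fill in, which is exactly why engineering cannot tell when the work is done.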

UX / Design Principles lists three to five short, directive principles that constrain how the product should feel and behave. These are not aesthetic preferences — they are decision constraints. When a designer faces a tradeoff, the principles should resolve it without requiring the PM to be in the room.

3. Scope & Capabilities: Why Scope Expands During the Build

Scope does not expand because teams are careless. It expands because excluding something requires owning that exclusion explicitly, and that accountability is uncomfortable. When the exclusions are not named, every engineer fills the ambiguity with what seems logical to them — and those inferences diverge.

The section body is a short TL;DR defining what is in scope and what is explicitly out. The subsections carry the detail:

Key Capabilities (AI + Human Friendly) translates the solution direction into outcome-based capability statements — what the system must be able to do, written without UI or implementation details. Capabilities written at the implementation level ("there should be a dropdown with three options") prescribe a solution before the designer has evaluated whether it serves the goal. Capabilities written at the outcome level ("the user must be able to select their notification frequency without navigating to account settings") define the constraint and leave the design decision where it belongs. The test: can a designer and an engineer read this list independently and arrive at the same understanding of what the system must enable?

In-Scope: Detailed User Stories lists prioritized user stories using real personas and outcome-focused language. These give the team shared understanding of expected behavior without dictating implementation.

Out-of-Scope names what is intentionally excluded or deferred. This subsection is not optional. A PM who writes "out of scope: mobile app support" must be prepared to defend that decision. Many teams avoid that accountability and leave scope implicit. The cost accumulates during the build, not at kickoff.

4. Delivery, Risks & Open Questions: The Section That Gets Left Blank

The structural reason this section gets left empty is that surfacing open questions feels like an admission that the work is not ready. A blank Open Questions section looks complete. A full one looks uncertain. This incentive inverts the purpose of the section entirely.

Open questions do not disappear when they are omitted from the PRD. They resurface during engineering implementation, when changing direction is most expensive. The section exists to make uncertainty visible before the build starts — and to assign accountability for resolving it.

Release Plan & Milestones aligns the team on timing, phasing, and how outcomes will be evaluated post-launch. Outcome-driven milestones belong here, not just dates. A milestone of "ship the feature" tells the team when to stop building. A milestone of "reach 40% activation among new accounts within 30 days of launch" tells them whether the work succeeded.

Constraints & Assumptions lists the technical, legal, and resource constraints that shape the solution, alongside the assumptions that must hold true for the approach to remain valid. These are the boundaries that prevent teams — and AI prototyping tools — from generating solutions that look correct but are incompatible with the actual environment.

Open Questions & Risks documents what is still unknown, with a named owner and target resolution date for each item. A PRD that has no open questions usually means the hard questions were deferred, not resolved.
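The owner-and-date rule is simple enough to check during PRD review. The following is a hypothetical sketch, not a feature of the template: the `OpenQuestion` record and the `review_open_questions` function are invented for illustration, and the example questions are placeholders. It flags items with no owner or no target resolution date, items whose date has already passed, and an empty list, which the paragraph above treats as a warning sign rather than a sign of readiness.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class OpenQuestion:
    """Hypothetical record for one item in Open Questions & Risks."""
    question: str
    owner: Optional[str] = None
    resolve_by: Optional[date] = None


def review_open_questions(items: list[OpenQuestion], today: date) -> list[str]:
    """Return review findings; an empty result means nothing was flagged."""
    if not items:
        # An empty section usually means questions were deferred, not resolved.
        return ["Open Questions & Risks is empty: confirm nothing was deferred."]
    findings = []
    for item in items:
        if not item.owner:
            findings.append(f"No owner: {item.question}")
        if item.resolve_by is None:
            findings.append(f"No target resolution date: {item.question}")
        elif item.resolve_by < today:
            findings.append(f"Past target date ({item.resolve_by.isoformat()}): {item.question}")
    return findings


items = [
    OpenQuestion("Do enterprise accounts need SSO at launch?", owner="Product lead",
                 resolve_by=date(2025, 3, 1)),
    OpenQuestion("Can the analytics pipeline report weekly return visits?"),
]
for finding in review_open_questions(items, today=date(2025, 2, 15)):
    print(finding)
# No owner: Can the analytics pipeline report weekly return visits?
# No target resolution date: Can the analytics pipeline report weekly return visits?
```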

What Separates an Adequate PRD from One That Actually Works

The difference is not length or section completeness. It is whether the PM's reasoning is visible.

An adequate PRD states what was decided. A good PRD shows why — why this problem over others, why this scope boundary here and not there, why this success metric and not a different one. The reasoning matters because the team will encounter edge cases the PRD did not anticipate. When the reasoning is visible, the team can apply it. When it is not, they either interrupt the PM or make a judgment call without context.

The table below shows the same Definition of Success written two ways:

| Attribute | Adequate | Good |
|---|---|---|
| Example | "Success metric: increase user engagement with the dashboard." | "Increase the % of active users returning to the dashboard 3+ times/week, from 22% to 35%, within 90 days of launch." |
| Specificity | Direction only: no threshold or segment defined | Specific behavior, specific segment, specific threshold |
| Timeline | Not defined | 90 days post-launch |
| Measurement | Implied; no method stated | Retention cohort data, tracked in analytics |
| Reasoning visible | No: goal stated as a direction without context | Yes: tied to a 3× higher 12-month retention rate among users who hit the threshold early |
| Engineering knows when done | No: "done" defaults to shipped | Yes: threshold and timeline are explicit |

Definition of Success, adequate vs. good: same section, different quality of reasoning.

The adequate version names a direction. The good version names a threshold, a timeline, and the business logic that makes the threshold meaningful. Engineering knows when the work succeeded. Design understands why the return behavior matters. The PM's reasoning travels with the document — and functions as a decision system even when the PM is not in the room.

Why AI Makes PRD Discipline More Important, Not Less

AI does not replace the thinking a PRD requires. It accelerates the drafting once the thinking is done — and it surfaces the cost of weak thinking faster than any human reviewer will.

The constraint that produces weak AI-assisted PRDs is the same one that produces weak PRDs without AI: the problem is not understood clearly enough to generate useful structure. When Problem Alignment is vague, an AI tool produces a plausible-looking document that lacks the specificity engineers need to act. When Key Capabilities are written as implementation details, AI prototyping tools generate constrained outputs that reflect the PM's prescriptions rather than the user's actual need.

As option generation becomes easier and cheaper, constraint discipline becomes more important, not less. The sections of the PRD that teams routinely weaken — Target Users, Definition of Success, Key Capabilities, Constraints — are precisely the inputs AI tools require to produce realistic outputs rather than plausible noise. A vague PRD and an AI tool produce a vague prototype, quickly. A precise PRD and an AI tool produce a working prototype the team can actually evaluate.

The free template includes all four sections with every subsection named, plus optional guidance toggles under each one explaining what to include, what good looks like, and what pitfalls to avoid. New PMs can use it as a coach. Experienced PMs can ignore the scaffolding and fill in the sections directly. It is built to work for both human collaborators and AI prototyping tools.

Download the Free PRD Template →

Already writing PRDs regularly and want to draft them faster? The AI PRD Assistant turns rough notes into complete, structured PRDs — and pushes back when the problem definition is not specific enough to build from.

Five Ways PRDs Stop Working Before the Build Starts

| Mistake | Section | What it looks like | What it costs |
|---|---|---|---|
| Problem Alignment written as feature justification | Problem Alignment | The problem statement starts from a solution already decided, built backward to justify it rather than diagnose it | The PRD cannot be challenged. Scope and priority calls are made before discovery is complete. |
| Definition of Success that can't be measured | Solution Summary | "Improve user satisfaction": no threshold, no timeline, no measurement method defined | "Done" defaults to shipped. The team has no signal for whether the work actually succeeded. |
| Out-of-Scope left blank | Scope & Capabilities | Items stay in because removing them would require owning and defending the exclusion | Scope expands during execution. Timeline stretches. Root cause: an Out-of-Scope subsection no one was willing to fill in. |
| Key Capabilities as implementation details | Scope & Capabilities | "Use a modal with a confirmation step" instead of what the system must enable | Designers receive a brief that limits their judgment before they've applied it. AI tools generate constrained outputs based on prescriptive inputs. |
| Open Questions & Risks left blank | Delivery, Risks & Open Questions | Hard questions are deferred rather than surfaced; the section is left empty because filling it felt like an admission of unreadiness | Questions resurface during engineering implementation, when they are most expensive to resolve. |

Five structural failure modes, each traced to a specific PRD section.

Problem Alignment written as feature justification. The section gets written backward — starting from a solution already decided and constructing a problem statement to justify it. The result is a PRD that cannot be challenged, because the question it is supposed to answer was answered before the document began.

A Definition of Success that cannot be measured. "Improve user satisfaction" or "increase product adoption" without a threshold, timeline, or measurement method are aspirations, not criteria. A build team cannot know when they have succeeded, which means done defaults to shipped.

Out-of-Scope left blank because excluding something felt risky. Scope does not expand through negligence. It expands because removing an item requires owning and defending the exclusion. When that accountability is uncomfortable, items stay in scope by default.

Key Capabilities written as implementation details. "Use a modal dialog with a confirmation step" is a design decision, not a capability. Capabilities describe what the system must enable, not how it should be built. When they prescribe implementation, designers receive a brief that limits their judgment before they have applied it.

Open Questions & Risks left blank. A PRD with nothing in this subsection is almost always a PRD where the hard questions were deferred rather than surfaced. They resurface during engineering implementation, when they are most expensive to answer.

Where PRD Quality Actually Comes From

A PRD improves product outcomes not by adding documentation, but by making the PM's reasoning visible enough that the team can apply it to situations the document never anticipated.

The four sections exist for this reason. Problem Alignment forces the diagnosis before the solution. Solution Summary forces an explicit choice about users, success, and design constraints before scope is negotiated. Scope & Capabilities forces the exclusions to be owned rather than left implicit. Delivery, Risks & Open Questions forces the hard questions to be named before the build — and assigns accountability for resolving them.

When any of those sections is weak, the decision system has a gap. The team fills it, usually inconsistently, usually under pressure, and usually in the direction of whatever reduces immediate friction rather than whatever serves the product outcome.

The free PRD template provides the structure. The work of filling it in (the diagnosis, the exclusions, the evidence, the reasoning) belongs to the PM. That work does not get easier with a better template. It gets easier when the PM understands what each section is actually doing and why the sections that feel optional are the ones the team needs most.

When a team's PRDs keep failing in the same ways, the cause is usually a combination of unclear problem ownership, undefined success criteria, and a review process that approves documents without challenging the reasoning behind them. These are systems problems, not document problems.

I work with product teams as a Fractional CPO to build the decision infrastructure that makes PRDs work: clear problem ownership, defined success criteria, and review cadence that surfaces scope issues before they become execution problems.